Identifying workloads to move to the cloud can be tricky. You have dozens or hundreds of apps running in your organization, and now that you’ve seen the operational efficiencies and agility available to you in the cloud, you’re tempted to move as many of them to the cloud as quickly as possible. As you’ll see in the examples below, cloud computing is indeed a good fit for many common workloads.

I firmly believe that infrastructure-as-a-service (IaaS) cloud is for every organization, but not for every application. The reality is that some applications just aren’t a good fit for the ephemeral and dynamic environment of the cloud. Still others have very specific environmental requirements that make them ill-suited to it. Read on as I explore what you should consider before earmarking a workload for the cloud.

Three Quick Criteria for a Good Fit

While each application is unique, and it’s important to apply your own lens when evaluating your cloud strategy, there are some rules of thumb that should help identify applications that are winning choices for cloud:

Unpredictable load or potential for explosive growth: Whenever your app is public facing, it has the potential to be wildly popular. Social games, eCommerce sites, blogs and software-as-a-service (SaaS) products fall into this category. If you release the next Farmville™ and your traffic spikes, you can scale up and down in the cloud according to demand, avoiding a “success disaster” and never over-provisioning your infrastructure.

Partial utilization: When traffic fluctuates – say with daily cycles of playing or shopping, or with occasional, compute-intensive batch processing – you can spin up extra servers in the cloud during the peaks and spin them down afterwards.

Easy parallelization: Applications like media streaming can be scaled horizontally and are generally a good use case for the cloud, because they scale out rather than up.

Finally, keep in mind the ideal of cloud computing as a way of using multiple resource pools – public cloud, private cloud, hybrid, your internal data center – not choosing one over the others. RightScale lets you see and manage all of them through one interface with a single set of tools and best practices.

Three Ideal Cloud Workloads

I’m going to cover three workloads that (in a range of different variants) RightScale helps to deploy on the cloud on a regular basis – Scalable Web Apps, Batch Processing, and Disaster Recovery. I’m going to talk about the following aspects of each of these workloads:

- Characteristics

- How the cloud helps

- Architecture example

After that, I’ll cover some workloads that are not great candidates for IaaS cloud. This should arm you with criteria for selecting the applications in your portfolio that can benefit from some “cloudification.”

1. Scalable Web Apps

Characteristics

Many Web apps have the potential for unpredictable spikes in traffic. Suddenly, you need to scale your infrastructure at an incredible pace to handle the extra load. If you can’t provision fast enough, or you’re running in a traditional data center environment with limited resources, you may be facing a “success disaster.” You’re getting more traffic than you’ve ever had before, but you can’t capitalize on it, or monetize it, because your infrastructure can’t keep up. Ideally your infrastructure is automated and can respond to these peaks without human intervention.

How the Cloud Helps

Cloud computing gives you access to virtually unlimited resources on demand – limited only by the capacity of the cloud providers you’ve chosen – so as you experience these spikes in traffic, their entire resource pool is accessible to you. And because you don’t have to keep enough infrastructure running all the time to cope with the peak load, you pay for only the resources that you use. Extra capacity costs you money only when you need it for spikes in traffic.

But to truly utilize these resources on demand, you need to be able to scale automatically. By monitoring the load of your application, RightScale determines the need to scale a tier of your infrastructure and automatically adds new resources to the pool to handle the additional load. RightScale gives you the automation tools and ease of operations to launch new servers easily and connect them to the rest of your deployment, including your load balancers, databases, and so forth.

Architecture Example

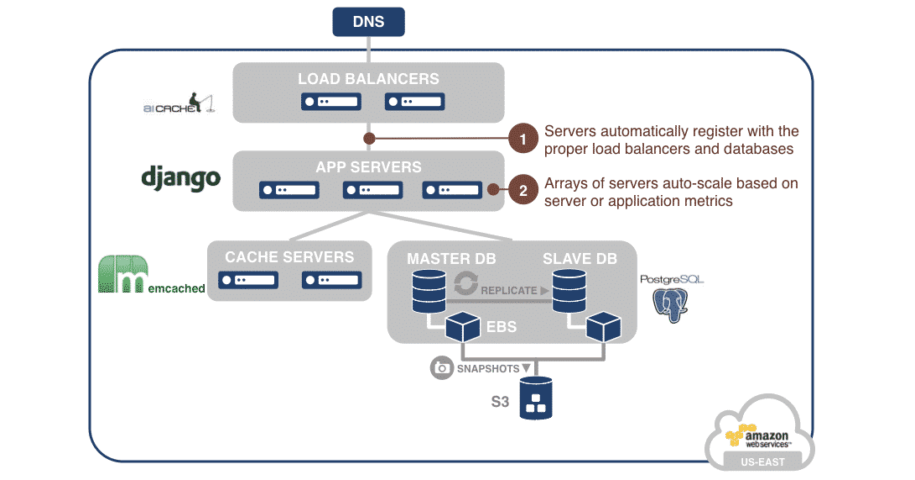

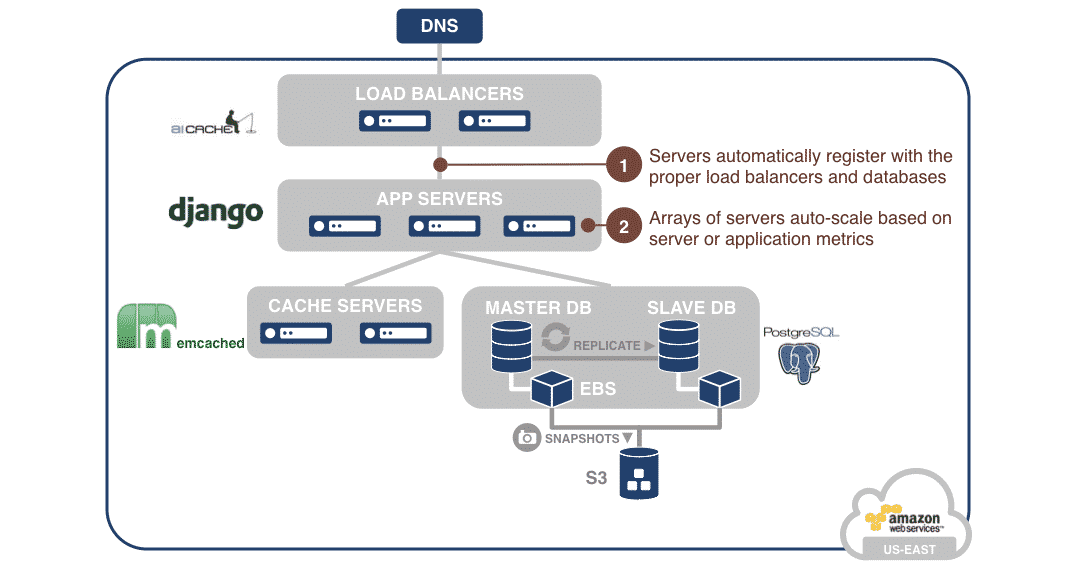

The architecture below shows a typical web application. It includes a load-balancing tier based on aiCache, an application tier running Python Django, a Memcached front end for improved performance, and a master-slave PostgreSQL database tier.

With RightScale monitoring and alerting, the Django application tier auto-scales up and down. Application activity is monitored – for example, the level of CPU use and the number of incoming requests – and the optimal number of application servers is always maintained. RightScripts or Chef recipes connect the application servers to the proper load balancers and databases automatically in real time.
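
To make the scaling decision concrete, here is a minimal Python sketch of a threshold rule driven by the two signals mentioned above, CPU use and incoming requests. It is an illustration only and not RightScale's actual alerting logic: the `TierMetrics` type, the `desired_server_count` function, and every threshold and limit are hypothetical values chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class TierMetrics:
    avg_cpu_percent: float      # average CPU use across the application tier
    requests_per_second: float  # incoming requests across the whole tier
    server_count: int           # servers currently running in the tier


def desired_server_count(
    metrics: TierMetrics,
    cpu_high: float = 75.0,             # assumed scale-up threshold
    cpu_low: float = 25.0,              # assumed scale-down threshold
    max_rps_per_server: float = 200.0,  # assumed per-server request capacity
    min_servers: int = 2,               # assumed floor for the tier
    max_servers: int = 20,              # assumed ceiling for the tier
) -> int:
    """Return the server count the tier should move toward."""
    target = metrics.server_count
    rps_per_server = metrics.requests_per_second / max(metrics.server_count, 1)

    if metrics.avg_cpu_percent > cpu_high or rps_per_server > max_rps_per_server:
        # Scale up by one server when either signal is running hot.
        target += 1
    elif metrics.avg_cpu_percent < cpu_low and rps_per_server < max_rps_per_server / 2:
        # Scale down by one server only when both signals are quiet.
        target -= 1

    # Never leave the configured bounds for the tier.
    return max(min_servers, min(max_servers, target))
```

Growing on either signal but shrinking only when both are quiet is a deliberately conservative choice: it favors keeping capacity during a spike over saving a server-hour.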

2. Batch Processing

Characteristics

Batch processing and tasks like encoding and decoding data streams require concentrating a great deal of processing power on huge workloads. Time is often of the essence in these jobs, so the objective is to dedicate all available resources to them, then hand off the results to another process. The need for these jobs is not constant, and their fluctuating utilization cycle often means high usage today and low usage tomorrow. So it’s not worth owning and maintaining all the infrastructure required for the spikes.

How the Cloud Helps

A cloud configuration can tie metrics to auto-scaling to boost the computing capacity of a tier: for example, a tier of encoding servers connected to a queue like RabbitMQ, or even to a database that stores the jobs to be executed. The servers in the tier work through the encoding jobs, and the system automatically adds servers to meet demand based on the length of the queue and the load on each server. So if there are a thousand jobs in the queue and each server can process only ten of them at a time, the system can spin up one hundred servers in the cloud to complete the task.
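
The queue arithmetic in that example can be sketched in a few lines of Python. This is a simplified illustration that sizes the tier from queue length alone; the `servers_needed` function and its optional `max_servers` cap are hypothetical names, not part of any RightScale or RabbitMQ API.

```python
from typing import Optional


def servers_needed(
    queued_jobs: int,
    jobs_per_server: int,
    max_servers: Optional[int] = None,  # assumed optional cap on tier size
) -> int:
    """Return how many servers are needed to take every queued job at once."""
    if jobs_per_server <= 0:
        raise ValueError("jobs_per_server must be positive")
    if queued_jobs <= 0:
        return 0

    # Ceiling division: a partly filled server still has to be launched.
    needed = (queued_jobs + jobs_per_server - 1) // jobs_per_server

    if max_servers is not None:
        needed = min(needed, max_servers)
    return needed


# The example from the text: 1,000 queued jobs, 10 jobs per server.
print(servers_needed(1000, 10))  # 100
```

A real policy would also weigh the load on each server, as the text notes, and would usually cap the tier so that a runaway queue cannot launch an unbounded number of servers.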

Either a private or a public cloud (but especially a public cloud) puts virtually unlimited computing resources at your disposal. RightScale lets you bring up large numbers of servers fast and dedicate huge numbers of servers to the task, then retire them as soon as the peak has passed.

What’s more, renting ten servers for one hundred hours costs the same as renting one thousand servers for one hour from a public cloud provider that bills at a flat hourly rate: either way, you pay for one thousand server-hours. So you can get your results extraordinarily quickly if you can batch and parallelize the task, and it doesn’t cost you any more because of the utility nature of the cloud!
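
The cost equivalence is simple server-hour arithmetic, sketched below in Python. The hourly rate is a made-up figure for illustration, and the sketch assumes flat per-hour billing with no volume discounts, minimum charges, or data transfer fees; `rental_cost_cents` is a hypothetical helper.

```python
def rental_cost_cents(servers: int, hours: int, rate_cents_per_hour: int) -> int:
    """Cost in cents of renting `servers` machines for `hours` hours each."""
    return servers * hours * rate_cents_per_hour


RATE_CENTS = 10  # hypothetical price: 10 cents per server-hour

slow = rental_cost_cents(servers=10, hours=100, rate_cents_per_hour=RATE_CENTS)
fast = rental_cost_cents(servers=1000, hours=1, rate_cents_per_hour=RATE_CENTS)

# Both runs consume 1,000 server-hours, so they cost the same.
print(slow, fast, slow == fast)  # 10000 10000 True
```

The equality holds only if the job parallelizes cleanly; any per-server startup time that is billed but not spent on useful work makes the wide run slightly more expensive.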

Architecture Example

The architecture shown below is for a mobile application that allows users to upload video. A batch process is needed to encode the video and serve it in different formats. It includes an auto-scaling Sorenson Squeeze array that performs the video encoding. This array scales based on the length of the job queue. A MySQL database maintains video meta-data.

3. Disaster Recovery

Characteristics

In case of a disaster or a problem in your production data center, disaster recovery depends on having the same set of resources at the ready in a different location and data center. You don’t want to buy and maintain an exact duplicate of your production-quality infrastructure when it’s going to sit idle most of the time, but when it is needed, you need to spin it up and cut over to it in a hurry to avoid downtime.

How the Cloud Helps

You can implement your disaster recovery plan in any public or private cloud, which gives you the advantage of geographic diversity. In the cloud, you can run a scaled-down version of your deployment at the disaster recovery site. For example, in the production data center and cloud, you might run your entire infrastructure – load balancers, servers, databases, etc. – then in the disaster recovery site you would run and pay for only a replicating slave database (or several databases if necessary to support your workload) to reduce costs.

Then, when you need to execute your disaster recovery plan, you use RightScale ServerTemplates and associated RightScripts to quickly launch the application servers and load balancers in your deployment. Again, automation tools for smoother failover help you not only when you need to get all the bits of infrastructure up and talking to one another in a disaster, but they also make it easy for you to test and enhance your disaster recovery process regularly without a huge investment in hardware.

Architecture Example

The architecture below shows a warm DR environment built to withstand a cloud failure. The production environment (left) has been cloned into a different cloud (right) to provide high availability. Redundant load balancers and application servers are staged and can be launched in a matter of minutes. Because a database can take much longer to come online, the redundant slave database is constantly running. Database replication and backups happen automatically using RightScripts. Automation tools switch environments in the event of an emergency, launching the staged servers, connecting the tiers, failing over the database, and switching over the DNS.
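
The ordered switchover described above can be pictured as a small runbook executor. The Python sketch below is a generic illustration, not how RightScale ServerTemplates or RightScripts work: `run_failover` is a hypothetical function, and the step actions are placeholders standing in for real automation.

```python
from typing import Callable, List, Optional, Tuple

Step = Tuple[str, Callable[[], None]]


def run_failover(steps: List[Step]) -> Tuple[List[str], Optional[str]]:
    """Run steps in order; return (completed step names, failed step or None)."""
    completed: List[str] = []
    for name, action in steps:
        try:
            action()
        except Exception:
            # Stop at the first failure so that later steps (such as the DNS
            # switch) never point traffic at a half-built environment.
            return completed, name
        completed.append(name)
    return completed, None


# Placeholder actions; real ones would call your automation tooling.
log: List[str] = []
steps: List[Step] = [
    ("launch staged servers", lambda: log.append("servers launched")),
    ("connect tiers", lambda: log.append("tiers connected")),
    ("fail over database", lambda: log.append("database promoted")),
    ("switch DNS", lambda: log.append("DNS switched")),
]

completed, failed = run_failover(steps)
print(completed, failed)
```

Keeping the DNS switch last means users are redirected only after every other tier is up, and the same step list can be run against a test environment to rehearse the plan regularly.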

Go to our website to see even more about disaster recovery using RightScale.

Workloads That Are Not a Good Fit for the Public Cloud

Just as there are some rules of thumb that can help you to identify workloads that are a great fit for IaaS cloud, there are some application requirements that can help identify workloads that are better left in their traditional infrastructure.

- High-performance applications that demand a lot of disk I/O and network throughput

Some proprietary databases and the applications that run on them require very high I/O and expect a consistent ability to read from and write to the disk systems. In general, the public cloud uses shared resources, so performance varies. Most of the time it doesn’t vary too greatly, but some databases are better off in a private cloud or a dedicated hybrid configuration where certain performance characteristics can be guaranteed.

Sometimes the problem is in the application design: when developers create an app without an eye to launching it in the cloud, unintended side effects can ensue. Certain legacy and enterprise apps are a good example, since they typically aren’t designed to work in an ephemeral and dynamic environment. Don’t try to force these round workloads into the square cloud box.

- Applications that demand low latency over the network

Some databases – especially in analytics applications – require high-throughput replication and clustering, and it is still hard to deliver that throughput in the public cloud. Some organizations accustomed to high-performance network storage try to move their applications off network attached storage (NAS) or a NetApp appliance in a typical data center and continue to use things like NFS storage in the cloud. But it’s hard to make an NFS server highly available in the cloud, and even then it likely won’t perform well. Instead, we see customers use S3 or Gluster for high-performance shared storage.

- Specific hardware dependencies

Not all hardware is available in the public cloud, so configurations that depend on rare or obsolete equipment are better off staying in the data center.

Develop Your Cloud Strategy

With these examples as a guide, you should be well-equipped to evaluate your portfolio of applications and decide which can benefit from the flexibility of the cloud and the automation that RightScale brings to the picture.

Better still, if you’d like to have us help you filter that list, contact RightScale and we can send you a Scoping Questionnaire that our team of engineers will be happy to evaluate.